Primary Resources and Services

HPC Services

ARCC staff host, maintain and support in-house HPC resources for use by the UW research community and collaborators. Learn more about how to get access to HPC resources available to you as a UW researcher.

Data Services

Our team provides data storage options as a core service, and some storage is available at little to no cost. Find out how to use the data storage already available to you as a UW researcher.

Miscellaneous Resources and Services

Our specialized services often start as a byproduct of specific needs from a researcher. If we do not provide something you need today, we may be able to provide it tomorrow.

User Support

Our staff works to support all research computing at UW, as well as specialty resources from outside facilities.


Highlights from ARCC Researchers

WyGISC Collaboration provides Hyper Screen Sharing to Campus & Beyond

Researchers at the School of Computing’s Wyoming Geographic Information Science Center (WyGISC) worked with colleagues at the Research and Economic Development’s Advanced Research Computing Center (ARCC) to provide SAGE3. “We have partnered with ARCC on various other successful projects in the past, such as Pathfinder and Alcova, to leverage their systems and expertise, so it just made sense for us to reach out to them on this one as well,” said Nicholas Case, a Geospatial Developer at WyGISC.

SAGE3 (https://sage3.sagecommons.org/) is an open-source collaboration platform funded by the National Science Foundation (NSF). It is similar to a Jamboard but offers many more features. It also complements video meeting tools like Zoom and Microsoft Teams and integrates artificial intelligence.

More specifically, SAGE3 lets researchers and students create collaboration boards to which they can add a variety of applications and widgets for coding, visualization, AI chat, and more, for whatever project they are working on together.

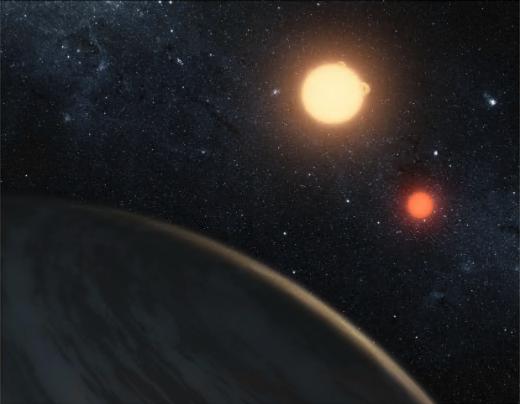

A Dance of Two Stars & A Million CPUs

By working together, a researcher at the University of Wyoming (UWyo) and the Advanced Research Computing Center (ARCC) completed over a year and a half’s worth of computational work in less than three weeks.

Binary star systems (two stars gravitationally bound in orbit around each other) are one of the many phenomena in our galaxy that seem straight out of science fiction. Tatooine, anyone? However, they are quite common in our very own Milky Way. That said, much research is still needed to classify eclipsing binary systems. One such researcher, Megan Frank, a PhD candidate in UWyo’s Department of Physics and Astronomy, is attempting to do just that. As Megan describes her project, “this project aims to classify four eclipsing binary systems located in the Large Magellanic Cloud (a dwarf galaxy of the Milky Way). These objects are a bit unique in that they showcase a unique reflection effect in their light curves, meaning that the space between the primary and secondary eclipse is sloped rather than flat.”

Important Links

See our policies page for information specific to the use of ARCC services and resources.

Check our News & Announcements for the latest information including maintenance, outages, and upcoming events.

Get ARCC departmental staff listings and contact information.

Documentation and Training Materials

ARCC offers a large selection of documentation and on-demand training to users. See our training and documentation index for the full list.

Why use HPC?

High-Performance Computing (HPC) refers to using powerful computers to perform complex calculations and simulations that are difficult or impossible to run on a conventional desktop computer. HPC can offer significant benefits in many applications. While it is not necessary for every project, it is a very valuable tool for researchers who want to spend less time waiting on computations or simulations.